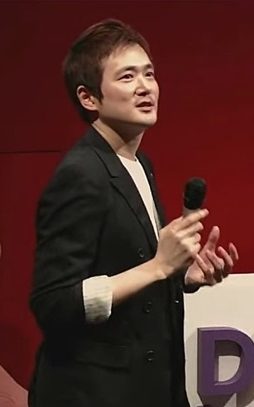

Youngjun Cho (조영준) is Professor of Computational Physiology in the Department of Computer Science at University College London (UCL) and part of GDI-ARC (Global Disability Innovation Academic Research Centre), UCLIC (UCL Interaction Centre) and the WHO Collaborating Centre for Research on Assistive Technology. He leads GDI’s Computational Physiology, Intelligence and Interaction (CompPhys) research and is a co-founder of KIT-AR (a UCL/Sintef spinout company). He is also Director of UCL Computer Science’s MSc Disability, Design and Innovation.

He explores, builds and evaluates novel techniques and technologies for the next generation of AI-powered physiological computing* that drives innovation in disability technology.

He has pioneered mobile thermal imaging-based physiological sensing and the automated detection of affective states (e.g. mental stress). He received his PhD from the Faculty of Brain Sciences at UCL; he also holds an MSc in Robotics and a BSc in ICT (summa cum laude).

Before joining UCL as a faculty member, he was a senior research scientist and specialist at a multinational corporation, where he led a range of research-driven innovations and successfully commercialised his novel sensing and machine learning technologies (e.g., an advanced touchscreen for vehicles**).

His research has been funded by UKRI, a ministerial department and various other organisations, including multinational corporations such as Bentley Motors. He has also secured a series of equipment grants that have contributed to our cutting-edge research environment (e.g., high-performance computing workstations, high-definition EEG and fNIRS devices, high-resolution thermal cameras), providing a strong base for driving innovation. His earlier academic studies (a 4-year BSc, a 2-year MSc and a 3-year PhD) were fully funded; the primary funders include prestigious scholarship and grant bodies: EC H2020, UCL-ORS, the National Research Foundation of Korea, LG and Samsung.

He has authored more than 100 articles (including a variety of patent publications) in areas related to computational physiology, affective computing, assistive technology, machine learning, human-computer interaction, accessible user interfaces, and multimodal sensing and feedback. Some of his achievements have been featured in general-audience media such as BBC News, AFP News, Phys.Org, Imaging and Machine Vision Europe, Science Daily and SBS News.

*By physiological computing, he means human-centred technology that helps us listen to our bodily functions and psychological needs, adapts its functionality accordingly, and offers personalised interventions.

**His main research outputs at the multinational corporation were selectively promoted as key strategic innovations to Google, BMW, Porsche, Bentley, Volkswagen, Jaguar, Mercedes-Benz and others. One of his successfully commercialised technologies is Proximity Touch for a 12.3-inch display unit (Porsche Panamera).

QUICK FACTS

• Multi-million pound research funding portfolio

• Research supervision (as of 2024): he supervises 10 PhD students and 4 Postdoctoral Research Fellows / Research Associates, plus, on average, more than 6 MSc/MEng students per year.

• Teaching: Programme Director of the MSc DDI programme; Research Methods & Making Skills (COMP0145, Module Leader); Affective Computing and Human Robot Interaction (COMP0053, Module Contributor); MSc DDI Dissertation (COMP0159, Module Leader: 2019 – 2024)

• Boards: UCL Grand Challenge of Transformative Technologies (2019 – present); MDPI Sensors Journal Topic Editor Board (2020 – 2021); Snowdon Scholarship for disabled students, review panel (2019 – 2022); Frontiers in Psychology / Computer Science Journal Topic Editor (2021 – 2022); and others.

• Conference organising committees, e.g. ACII 2022 (Tutorial Chair), ACII 2021 (Special Session Chair), ICMI 2020 (Senior Program Committee) and ACII 2019 (Senior Program Committee).

• Reviewer: more than 10 journals (e.g. TOCHI, Biomedical Optics Express, JMIR) and more than 10 international conference proceedings (e.g. CHI, UIST, ACII, EuroHaptics, ICMI)
